Modern Approaches to Unified Batch & Stream Processing

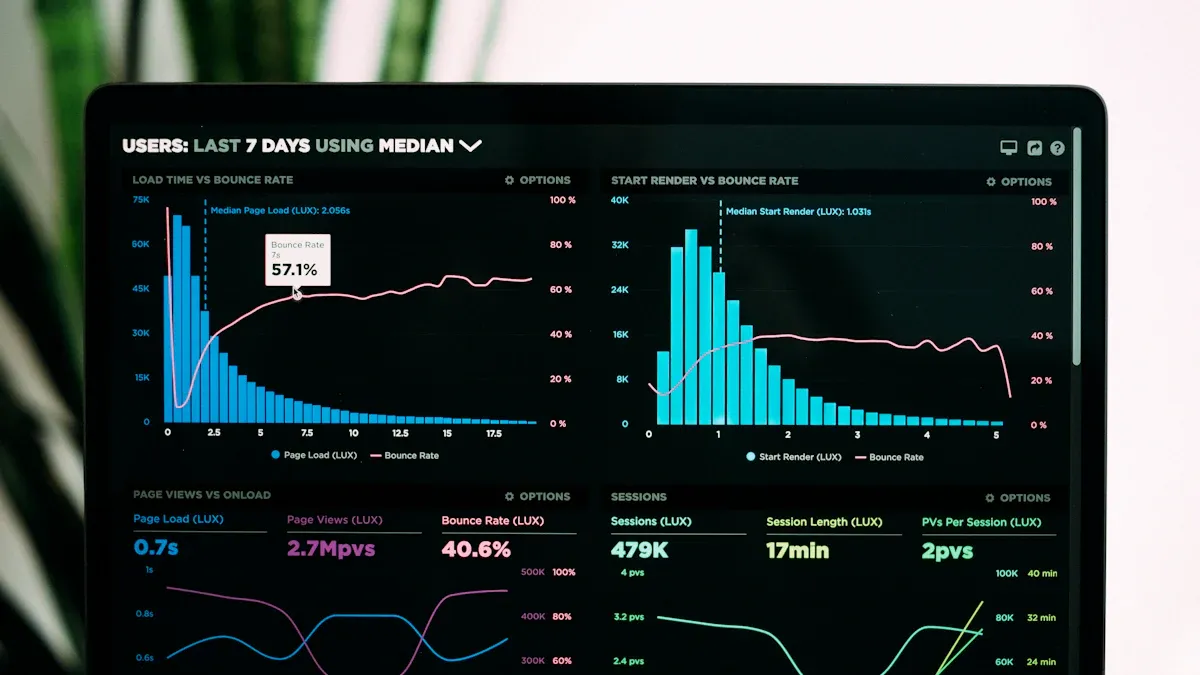

Unified batch and stream processing lets you handle both historical and real-time data in one system. New data is processed as it arrives, and history stays queryable in the same place. As a result, you get insights with low latency and can make decisions quickly. With unified platforms, you can watch live dashboards, respond to problems fast, and adapt to market changes.

| Criteria | Traditional Analytics Platforms | Unified Data Analytics Platforms (UDAPs) |
|---|---|---|
| User Experience | Complex, requires IT involvement | Simplified, intuitive interface |
| Speed of Results | Batch processing delays | Near-instant results |
| Accessibility of Insights | Limited to IT or data teams | Accessible to all users |
| Agility in Decision-Making | Slower due to processing times | Faster, enabling agile responses |

Modern approaches unify your data journey and boost business agility.

Key Takeaways

Unified batch and stream processing allows you to analyze both historical and real-time data in one system, saving time and improving decision-making.

Using unified platforms leads to faster insights and better data accessibility for all users, not just IT teams.

Architectures such as Lambda and Kappa integrate batch and stream processing. Kappa in particular reduces complexity and cost by replacing Lambda's two pipelines with a single streaming pipeline.

Implementing best practices, such as agile project management and fostering collaboration, enhances the effectiveness of your data projects.

Real-time analytics and unified processing are essential for industries like finance and e-commerce, where quick decisions are crucial.

Unified Processing Explained

Core Concepts

Unified processing lets you work with both historical and real-time data in one system. You do not need to switch between separate tools or pipelines. This approach combines batch processing, which handles large amounts of past data, and stream processing, which deals with data as it arrives. You get the best of both worlds.

Here is a table that shows the main technical principles:

| Principle | Batch Processing | Stream Processing |
|---|---|---|
| Data scope | Processes large volumes of historical data | Processes real-time data as it arrives |
| Latency | Higher latency, traded for accuracy and completeness | Lower latency for immediate insights |

Running batch and stream processing as separate pipelines increases complexity, while a unified architecture reduces it.

You benefit from a system that supports both batch and stream tasks. The infrastructure layer provides generic, reusable functions, so you do not need to build everything from scratch. The application layer expresses your logic in clear, descriptive terms that are easier to understand.

Tip: Unified processing combines both batch and streaming data, so you can analyze trends and react to events at the same time.
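The idea that one piece of logic can serve both historical and live data can be sketched in plain Python. This is a minimal illustration, not any framework's API. The event shape `(key, value)` and the names `running_totals` and `live_source` are hypothetical.

```python
from collections import defaultdict
from typing import Iterable, Iterator, Tuple

Event = Tuple[str, int]  # hypothetical event shape: (key, value)


def running_totals(events: Iterable[Event]) -> Iterator[Tuple[str, int]]:
    """Emit the updated total for a key after every event.

    The same logic works on bounded input (batch) and unbounded input (stream),
    because it only looks at one event at a time plus the running state.
    """
    totals = defaultdict(int)
    for key, value in events:
        totals[key] += value
        yield key, totals[key]


# Batch: a bounded list of historical events; keep only the final total per key.
history = [("a", 1), ("b", 2), ("a", 3)]
final_totals = dict(running_totals(history))  # {'a': 4, 'b': 2}


# Stream: an unbounded source, simulated here by a generator; react to every update.
def live_source() -> Iterator[Event]:
    yield ("a", 5)
    yield ("b", 1)


for key, total in running_totals(live_source()):
    print(f"{key} -> {total}")
```

In the batch case you keep only the last value per key. In the streaming case you act on every intermediate update. Real engines add distribution, state storage, and fault tolerance on top of this basic idea.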

Why It Matters

Unified processing changes how you handle data. You do not waste time switching between systems. You see results faster and make better decisions. Here is how unified processing compares to traditional methods:

| Feature | Traditional Processing | Unified Processing |
|---|---|---|
| Data Handling | Batch processing in chunks | Integrates batch and stream |
| Latency | High due to scheduled intervals | Reduced latency for real-time |
| Flexibility | Limited to batch or stream | Handles both seamlessly |
| User Experience | Varies by architecture | Improved with unified frameworks |

You save time and money. Some organizations report a 3.2x return on investment in the first year. You can automate routine tasks and focus on important work, and teams sometimes finish projects in less than two months. You also improve data quality and compliance, which helps with audits and teamwork. Modern approaches to unified processing give you the flexibility to grow as your data needs change.

Batch vs. Stream Processing

Batch Overview

Batch processing lets you handle large amounts of data at once. You collect data over a period of time, then process it in chunks. This method works well for tasks like generating reports or analyzing historical trends. You do not need to worry about immediate results. Instead, you focus on accuracy and completeness.

| Feature | Batch Processing |
|---|---|
| Handling Data | Processes large amounts of data in chunks |
| Time Sensitivity | Not time-sensitive, focuses on historical data |
| Processing Flow | Processes data offline or at scheduled intervals |
| Resource Allocation | Efficient use of resources during low activity |
| Complex Calculations | Suitable for complex calculations and reports |

Batch systems often use traditional storage and run jobs when system activity is low. This approach helps you save resources and manage costs.
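A minimal Python sketch of a scheduled batch job follows. It assumes the historical records have already been collected and can be iterated, for example from a file. `chunks`, `nightly_report`, and the default chunk size are hypothetical.

```python
from itertools import islice
from typing import Dict, Iterable, Iterator, List


def chunks(records: Iterable[float], size: int) -> Iterator[List[float]]:
    """Split a finite dataset into fixed-size chunks (the last one may be shorter)."""
    if size <= 0:
        raise ValueError("size must be positive")
    it = iter(records)
    while True:
        batch = list(islice(it, size))
        if not batch:
            return
        yield batch


def nightly_report(records: Iterable[float], size: int = 1000) -> Dict[str, float]:
    """Hypothetical scheduled job: aggregate all collected records chunk by chunk.

    The report exists only after every chunk has been processed, which is why
    batch results arrive when the job finishes rather than as data comes in.
    """
    count, total = 0, 0.0
    for batch in chunks(records, size):
        count += len(batch)
        total += sum(batch)
    return {"count": count, "total": total, "mean": total / count if count else 0.0}


print(nightly_report(range(1, 11), size=3))  # {'count': 10, 'total': 55.0, 'mean': 5.5}
```

Processing in chunks keeps memory use bounded even when the full dataset is much larger than RAM.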

Stream Overview

Stream processing works differently. You process data as soon as it arrives. This method gives you real-time insights and lets you react quickly to new information. Stream processing is ideal for monitoring live events, tracking user activity, or detecting fraud.

Processes data in real-time as it arrives

Focuses on immediate data analysis

Runs continuously and incrementally

Needs dedicated resources for high-speed processing

Enables rapid response and immediate insights

You use specialized frameworks to handle streaming data. These systems need low latency and strong fault tolerance to keep up with the flow.
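The continuous, incremental style can be illustrated in a few lines of Python. State is updated once per event, and each event can trigger a reaction right away. `RunningMean`, `monitor`, and the latency-monitoring scenario are illustrative assumptions, not a specific framework's API.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator, Tuple


@dataclass
class RunningMean:
    """Incremental mean: constant-size state, updated per event, never rescans old data."""
    count: int = 0
    mean: float = 0.0

    def update(self, x: float) -> float:
        self.count += 1
        self.mean += (x - self.mean) / self.count
        return self.mean


def monitor(latencies_ms: Iterable[float], threshold_ms: float) -> Iterator[Tuple[float, bool]]:
    """Process each reading as it arrives and flag it immediately if it is slow."""
    stats = RunningMean()
    for value in latencies_ms:
        avg = stats.update(value)
        yield avg, value > threshold_ms


print(list(monitor([2, 4, 6], threshold_ms=5)))  # [(2.0, False), (3.0, False), (4.0, True)]
```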

Key Limitations

Both batch and stream processing have challenges you should know. The first five below apply mainly to batch processing and the last five to stream processing:

Delayed outcomes: Batch jobs only give results after processing finishes, which can slow down your response.

Resource spikes: Running large batch jobs can strain your system and cause slowdowns.

Inflexibility: Changing batch workflows often takes a lot of effort.

Error propagation: Mistakes in batch jobs can affect all the data in that batch.

Higher upfront costs: Setting up batch systems can be expensive.

Increased complexity: Stream processing needs advanced tools and skills.

Higher operational costs: Real-time systems use more computing power.

Data accuracy challenges: Streams can have out-of-order events, making consistency hard.

Monitoring and maintenance: Streaming systems need constant attention.

Limited historical context: Streams focus on current data, so you may miss deeper trends.

Note: Understanding these differences helps you choose the right approach for your data needs. Sometimes, combining both methods gives you the best results.

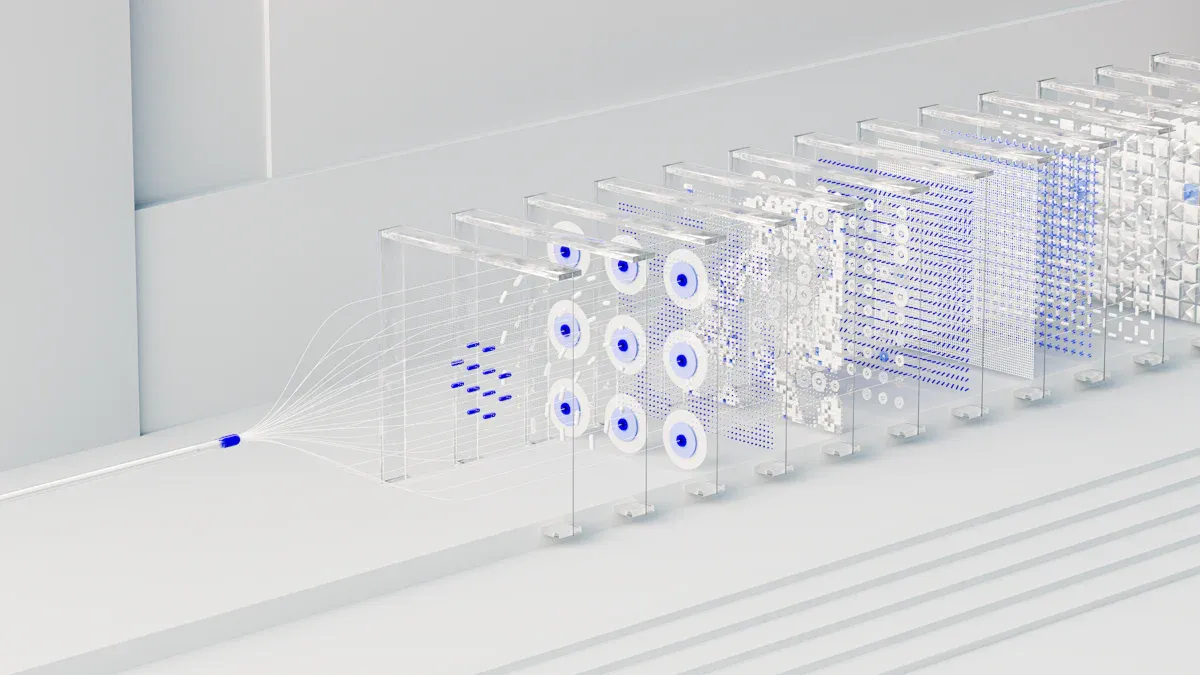

Modern Approaches to Unification

You can choose from several modern approaches when you want to unify batch and stream processing. Three common models are Lambda Architecture, Kappa Architecture, and the Stream-First Model. Each approach helps you handle both historical and real-time data, but they do so in different ways. Understanding these models will help you pick the best one for your needs.

Lambda Architecture

Lambda Architecture combines batch and stream processing to give you real-time insights and accurate results. You use two separate paths:

The batch layer (cold path) processes large amounts of historical data. This layer gives you accurate and complete results, but it takes more time and resources.

The speed layer (also called the stream layer, or hot path) handles new data as it arrives. This layer gives you quick answers, but its results can be less accurate or complete than those of the batch layer.

You need to manage two different systems for storage and computation. This can make your setup more complex.

With Lambda Architecture, you get the benefit of both worlds. A serving layer merges the batch and real-time views at query time. You see fast results from the speed layer and get reliable answers from the batch layer. Many organizations use this model when they need both speed and accuracy.

Tip: Lambda Architecture works well if you want to balance real-time insights with deep historical analysis.
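The following toy Python sketch shows how the two paths meet, using a page-view count as the metric. A serving layer answers each query by combining the batch view with the speed layer's recent updates. All names are hypothetical, and the real work (distributed storage, scheduling the batch recomputation) is omitted.

```python
from collections import Counter
from typing import Iterable, Tuple

Event = Tuple[str, int]  # hypothetical (page, views)


def batch_view(history: Iterable[Event]) -> Counter:
    """Batch layer: recompute complete, accurate totals from all historical data."""
    view = Counter()
    for page, views in history:
        view[page] += views
    return view


class SpeedView:
    """Speed layer: incremental totals for events the last batch run has not covered yet."""

    def __init__(self) -> None:
        self.view = Counter()

    def on_event(self, page: str, views: int) -> None:
        self.view[page] += views

    def reset(self) -> None:
        # Called once a new batch view covers these events, to avoid double counting.
        self.view.clear()


def query(page: str, batch: Counter, speed: SpeedView) -> int:
    """Serving layer: merge the two views at query time (missing keys count as 0)."""
    return batch[page] + speed.view[page]


batch = batch_view([("home", 10), ("docs", 4)])
speed = SpeedView()
speed.on_event("home", 2)
print(query("home", batch, speed))  # 12
```

The need to keep two code paths (`batch_view` and `SpeedView.on_event`) computing the same result is exactly the maintenance cost that Kappa Architecture tries to avoid.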

Kappa Architecture

Kappa Architecture offers a simpler way to unify data processing. You use only one stream processing pipeline for both real-time and historical data. This means you do not need to build and maintain two separate systems.

Here is a table that compares Kappa and Lambda Architectures:

| Feature | Kappa Architecture | Lambda Architecture |
|---|---|---|
| Processing Model | Single stream processing pipeline | Dual-pipeline (batch and speed layers) |
| Complexity | Simplified architecture with lower maintenance costs | More complex, requiring more resources and maintenance |
| Historical Data Processing | Achieved through stream replay | Handled by the batch layer |
| Real-time Data Processing | Integrated into the same pipeline | Managed by the speed layer |
| Machine Learning Integration | Unified workflows for both historical and real-time | Separate processes for batch and real-time data |

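To process historical data, you replay the retained event stream through the same pipeline. This requires the log to keep enough history to cover what you want to reprocess. It makes your system easier to manage and reduces costs. Kappa Architecture fits well if you want to keep things simple and focus on real-time processing.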
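Here is a minimal Python sketch of the replay idea. It assumes the stream is retained in an append-only log for as long as you may need to reprocess it. `EventLog`, `count_by_key`, and `replay` are hypothetical stand-ins. In practice, the log is usually a system such as Kafka and the processor is a streaming engine.

```python
from typing import Callable, Dict, Iterator, List, Tuple

Event = Tuple[str, int]
State = Dict[str, int]


class EventLog:
    """Append-only log with offsets, standing in for a retained stream."""

    def __init__(self) -> None:
        self._events: List[Event] = []

    def append(self, event: Event) -> int:
        self._events.append(event)
        return len(self._events) - 1  # offset of the new event

    def read_from(self, offset: int) -> Iterator[Event]:
        return iter(self._events[offset:])


def count_by_key(state: State, event: Event) -> State:
    """The single processing function, used for live data and for replays alike."""
    key, value = event
    state[key] = state.get(key, 0) + value
    return state


def replay(log: EventLog, process: Callable[[State, Event], State], from_offset: int = 0) -> State:
    """Rebuild state from scratch by re-reading the log through the same pipeline."""
    state: State = {}
    for event in log.read_from(from_offset):
        state = process(state, event)
    return state


log = EventLog()
for e in [("a", 1), ("b", 2), ("a", 3)]:
    log.append(e)
print(replay(log, count_by_key))  # {'a': 4, 'b': 2}
```

To change the logic, you deploy a new version of the processing function and replay from offset 0. No separate batch layer is involved.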

Stream-First Model

The Stream-First Model puts real-time data at the center of your system. You process data as soon as it arrives, which helps you keep your information fresh and up to date. This model supports both streaming and batch workloads, so you do not have to choose one over the other.

You get low data latency and query latency, which means you see results quickly.

Native connectors let you bring in data from many sources without extra work.

Materialized views update continuously, so your dashboards always show the latest numbers.

You can handle both live and historical data in one system.

This approach works well for applications that need fast insights and flexible data handling. You can use the Stream-First Model to power live dashboards, monitor events, or run analytics on both new and old data.
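A continuously updated materialized view can be sketched in a few lines of Python. This simplified illustration assumes events arrive in order and fit in one process. `RevenueByRegionView` is a hypothetical name, not a product API. Historical backfill and live events go through the same `apply` method.

```python
from collections import defaultdict
from typing import Dict, Tuple

Order = Tuple[str, float]  # hypothetical (region, amount)


class RevenueByRegionView:
    """Materialized view kept up to date on every event instead of recomputed per query."""

    def __init__(self) -> None:
        self._rows: Dict[str, float] = defaultdict(float)

    def apply(self, order: Order) -> None:
        region, amount = order
        self._rows[region] += amount

    def snapshot(self) -> Dict[str, float]:
        # What a dashboard reads: an already-computed result, so queries stay cheap.
        return dict(self._rows)


view = RevenueByRegionView()
# Backfill from historical data, then keep consuming live events the same way.
for order in [("eu", 10.0), ("us", 5.0)]:
    view.apply(order)
view.apply(("eu", 2.5))
print(view.snapshot())  # {'eu': 12.5, 'us': 5.0}
```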

Note: Stream processing can sometimes replace batch processing. If your system needs instant results, you may not need a separate batch layer. This change can make your design simpler and faster, but you must plan for data consistency and fault tolerance.

Lambda, Kappa, and the Stream-First Model give you several options for unified data processing. You can choose the one that matches your goals, whether you want speed, accuracy, or simplicity.

Enabling Technologies

Modern unified batch and stream processing relies on powerful technologies. These tools help you manage data efficiently and gain real-time insights.

Apache Flink

Apache Flink gives you a unified API for both batch and streaming workloads. You can write one set of code and use it for different types of data processing. Flink provides advanced state management and event-time semantics, so you can handle complex tasks and get accurate results even when real-time data arrives late or out of order. Flink supports exactly-once state consistency, so after a failure no event is lost from, or counted twice in, its state. Many companies use Flink for large-scale, low-latency applications. Flink’s batch performance is good, although Spark is often reported to run batch jobs faster. The companion project Apache Flink CDC adds snapshot and incremental data ingestion, schema synchronization, and full database synchronization.

| Framework | Key Features |
|---|---|
| Apache Flink | Unified API for batch and streaming, supports same code for both types of processing. |
| Apache Flink CDC | Snapshot and incremental data ingestion, schema synchronization, full database synchronization. |
| Apache Paimon | Supports streaming and batch reads/writes, ACID transactions, time travel, schema evolution. |

Tip: Flink’s optimization strategies help you balance speed and accuracy for both stream and batch jobs.
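Flink's exactly-once guarantee for state rests on a simple idea. The engine periodically snapshots operator state together with the position in a replayable source, then rolls back to the latest snapshot after a failure. The single-process Python toy below illustrates only that idea. It is not Flink's API, and `CheckpointedCounter` and `run` are hypothetical names.

```python
import json
from typing import Dict, List, Optional, Tuple

Event = Tuple[str, int]


class CheckpointedCounter:
    """Toy operator: keyed counts plus the source offset, snapshotted together."""

    def __init__(self) -> None:
        self.state: Dict[str, int] = {}
        self.next_offset = 0  # position in the source to read next

    def process(self, event: Event) -> None:
        key, value = event
        self.state[key] = self.state.get(key, 0) + value
        self.next_offset += 1

    def checkpoint(self) -> str:
        # State and offset are captured in one snapshot, so they always agree.
        return json.dumps({"offset": self.next_offset, "state": self.state})

    @classmethod
    def restore(cls, snapshot: str) -> "CheckpointedCounter":
        data = json.loads(snapshot)
        op = cls()
        op.next_offset = data["offset"]
        op.state = data["state"]
        return op


def run(source: List[Event], checkpoint_every: int, fail_at: Optional[int] = None) -> Dict[str, int]:
    """Process a replayable source; optionally crash once at offset `fail_at` and recover."""
    op = CheckpointedCounter()
    last_snapshot = op.checkpoint()
    crashed = False
    while op.next_offset < len(source):
        if fail_at is not None and not crashed and op.next_offset == fail_at:
            crashed = True
            # Simulated crash: in-memory progress since the last snapshot is lost.
            op = CheckpointedCounter.restore(last_snapshot)
            continue
        op.process(source[op.next_offset])
        if op.next_offset % checkpoint_every == 0:
            last_snapshot = op.checkpoint()
    return op.state


src = [("a", 1), ("b", 1), ("a", 1), ("a", 1), ("b", 1)]
print(run(src, checkpoint_every=2, fail_at=3) == run(src, checkpoint_every=2))  # True
```

After the simulated crash, the event at offset 2 is read twice but counted once, because the state rolled back together with the offset. End-to-end exactly-once output additionally needs transactional or idempotent sinks.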

Apache Kafka

Apache Kafka acts as the backbone for many real-time data pipelines. You can process and analyze data as soon as it is generated. Kafka Streams lets you transform and aggregate data on-the-fly. This helps you get more value from your data quickly. Kafka works well with microservices and cloud-native systems. You can build unified data pipelines that support both batch and streaming needs.
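Kafka Streams itself is a Java library. The Python sketch below only mirrors the shape of a typical topology so the on-the-fly idea is visible: filter, map, then a per-key count that updates on every record. The click format and `clicks_per_page` are hypothetical, and no Kafka client is involved.

```python
from collections import Counter
from typing import Iterable, Iterator, Tuple

Click = Tuple[str, str]  # hypothetical (user_id, page)


def clicks_per_page(clicks: Iterable[Click]) -> Iterator[Tuple[str, int]]:
    """Stateless steps (filter, map) followed by a stateful per-key count.

    Each input record produces an updated count downstream immediately.
    """
    counts: Counter = Counter()
    for user_id, page in clicks:
        if not user_id:                   # filter: drop anonymous clicks
            continue
        page = page.lower().rstrip("/")   # map: normalize the page path
        counts[page] += 1                 # aggregate: running count per page
        yield page, counts[page]


events = [("u1", "/Home/"), ("", "/home"), ("u2", "/home"), ("u1", "/docs")]
print(list(clicks_per_page(events)))  # [('/home', 1), ('/home', 2), ('/docs', 1)]
```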

Apache Beam

Apache Beam gives you a single programming model for both batch and streaming data. You can write your pipeline once and run it on different engines, such as Spark or Flink. Beam supports multiple programming languages, so your team can use the tools they know best.

| Feature | Description |
|---|---|
| Unified Batch and Streaming | Apache Beam allows a single model to handle both batch and streaming data, simplifying development. |
| Portability | It is platform-agnostic, enabling pipelines to run on various supported engines without concern for infrastructure. |
| Flexible SDK Support | Offers SDKs in multiple programming languages, allowing teams to use the languages that best fit their needs. |
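Beam's portability comes from separating the pipeline definition from the runner that executes it. The Python sketch below imitates that separation with plain generators. It does not use the Beam SDK, and `pipeline`, `batch_runner`, and `streaming_runner` are hypothetical names chosen for illustration.

```python
from typing import Callable, Iterable, Iterator, List

Transform = Callable[[Iterator[int]], Iterator[int]]


def pipeline(*steps: Transform) -> Transform:
    """Compose transforms once, independent of how they will be executed."""
    def run(items: Iterator[int]) -> Iterator[int]:
        for step in steps:
            items = step(items)
        return items
    return run


def keep_even(items: Iterator[int]) -> Iterator[int]:
    return (x for x in items if x % 2 == 0)


def square(items: Iterator[int]) -> Iterator[int]:
    return (x * x for x in items)


def batch_runner(p: Transform, data: Iterable[int]) -> List[int]:
    """Runner for bounded data: materialize the whole result at the end."""
    return list(p(iter(data)))


def streaming_runner(p: Transform, source: Iterable[int], sink: Callable[[int], None]) -> None:
    """Runner for unbounded data: push each result to a sink as soon as it is ready."""
    for result in p(iter(source)):
        sink(result)


even_squares = pipeline(keep_even, square)
print(batch_runner(even_squares, range(6)))      # [0, 4, 16]
streaming_runner(even_squares, range(6), print)  # prints 0, 4, 16 one per line
```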

Other Tools

You can also use tools like Apache Paimon for ACID transactions, time travel, and schema evolution. These features help you keep your data accurate and flexible. Many organizations combine these technologies to build robust, unified data platforms.
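Time travel and atomic commits are easier to picture with a toy. The Python sketch below assumes a simple copy-on-write design in which each commit publishes a new snapshot. It only illustrates the concepts and is not how Apache Paimon stores data or its API. `SnapshotTable` is a hypothetical name.

```python
from typing import Dict, Iterable, List


class SnapshotTable:
    """Toy table format: every successful commit publishes a new immutable snapshot."""

    def __init__(self) -> None:
        self._snapshots: List[Dict[str, dict]] = [{}]  # snapshot 0 is the empty table

    @property
    def latest_id(self) -> int:
        return len(self._snapshots) - 1

    def commit(self, upserts: Dict[str, dict], deletes: Iterable[str] = ()) -> int:
        """Apply a whole batch of changes atomically, or none of them."""
        new = dict(self._snapshots[-1])  # copy-on-write (shallow, for brevity)
        for key in deletes:
            if key not in new:
                # Abort before publishing: readers never see a half-applied commit.
                raise KeyError(f"cannot delete missing key {key!r}")
            del new[key]
        new.update(upserts)
        self._snapshots.append(new)  # publishing the snapshot is the single commit point
        return self.latest_id

    def read(self, snapshot_id: int = -1) -> Dict[str, dict]:
        """Read the latest state, or time travel to an earlier snapshot id."""
        return dict(self._snapshots[snapshot_id])


t = SnapshotTable()
s1 = t.commit({"1": {"qty": 5}})
s2 = t.commit({"1": {"qty": 7}, "2": {"qty": 1}})
print(t.read())    # {'1': {'qty': 7}, '2': {'qty': 1}}
print(t.read(s1))  # {'1': {'qty': 5}}
```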

Note: Choosing the right tools depends on your data needs, team skills, and business goals.

Implementation Strategies

Scalability & Consistency

You need to plan for growth when you build a unified batch and stream processing system. Many teams face challenges like network bandwidth limits, old systems, and security risks. You can see common challenges and solutions in the table below:

| Challenge | Solution |
|---|---|
| Network bandwidth constraints | Assess your network capacity in advance so it can handle growing data volumes. |
| Integration with legacy systems | Use connectors and Change Data Capture (CDC) to link old and new systems without disrupting them. |
| Security concerns | Set up strong security rules from the start. |
| Scalability and performance | Monitor your system and tune it to remove bottlenecks. |

You should also focus on consistency. Use a central data platform to manage all your data activities. This helps you keep your data accurate and easy to control.

Integration Steps

You can follow a few steps to add unified processing to your current data setup:

Support both batch and streaming data sources. Your system should handle data that comes in all at once and data that arrives bit by bit.

Use Change Data Capture (CDC) to keep your data lake in sync with other systems.

Allow for both low-latency streaming and high-throughput batch jobs. This gives you flexibility in how you read data.

Make sure your compute engine can process both real-time and batch data with the same tools.

Manage state well in both streaming and batch jobs.

Keep event-time processing accurate for all data types.

Support exactly-once processing to avoid errors.

Use ACID transactions to keep updates safe and reliable.

These steps help you apply modern unified-processing approaches in your data projects.
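Several of these steps (CDC, exactly-once processing, ACID updates) meet in the component that writes change events into the lake. The Python sketch below assumes each change event carries a monotonically increasing log offset. That lets the sink skip redelivered events, so retries do not change the result. `ChangeEvent`, `CdcSink`, and the `offset` field are hypothetical; real CDC tools expose log positions in their own formats.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, Optional


@dataclass(frozen=True)
class ChangeEvent:
    offset: int                   # position in the source's change log (hypothetical field)
    op: str                       # "insert", "update", or "delete"
    key: str
    value: Optional[dict] = None


class CdcSink:
    """Applies CDC events to a table and skips anything it has already applied."""

    def __init__(self) -> None:
        self.table: Dict[str, dict] = {}
        self.last_offset = -1  # in a real system, committed atomically with the table

    def apply(self, events: Iterable[ChangeEvent]) -> None:
        for e in events:
            if e.offset <= self.last_offset:
                continue  # duplicate delivery after a retry: ignore it
            if e.op in ("insert", "update"):
                self.table[e.key] = dict(e.value or {})
            elif e.op == "delete":
                self.table.pop(e.key, None)
            else:
                raise ValueError(f"unknown op {e.op!r}")
            self.last_offset = e.offset


sink = CdcSink()
events = [
    ChangeEvent(0, "insert", "42", {"status": "new"}),
    ChangeEvent(1, "update", "42", {"status": "paid"}),
    ChangeEvent(2, "insert", "43", {"status": "new"}),
    ChangeEvent(3, "delete", "43"),
]
sink.apply(events)
sink.apply(events[1:])  # simulated redelivery after a retry: no effect
print(sink.table)  # {'42': {'status': 'paid'}}
```

In production, the table update and `last_offset` must be committed in the same transaction. Otherwise a crash between the two writes could still cause duplicates or loss.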

Data Quality

You want your data to be correct and trustworthy. You can use these best practices:

| Best Practice | Description |
|---|---|
| Central Data Platform | Use one system to manage all your data for better control and consistency. |
| Centralized Governance | Set rules early to keep your data safe and follow laws. |
| Automation for Quality | Use automated tools to check and fix data problems. |
| Data Version Control | Save each version of your data so you can track changes over time. |

You can build a strong foundation for your data by following these steps. This will help you get the most out of your unified processing system.
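For the Automation for Quality practice, a check can be as simple as a list of named rules applied to every record, whether it comes from a batch load or a stream. The Python sketch below assumes a hypothetical payment record with `id`, `amount`, and `currency` fields. The rules and the quarantine approach are illustrative, not a specific tool's behavior.

```python
from typing import Callable, Dict, Iterable, List, Tuple

Record = Dict[str, object]
Rule = Tuple[str, Callable[[Record], bool]]  # (rule name, predicate that must hold)

RULES: List[Rule] = [
    ("has_id", lambda r: bool(r.get("id"))),
    ("amount_non_negative",
     lambda r: isinstance(r.get("amount"), (int, float)) and r["amount"] >= 0),
    ("known_currency", lambda r: r.get("currency") in {"EUR", "USD"}),
]


def validate(
    records: Iterable[Record], rules: List[Rule] = RULES
) -> Tuple[List[Record], List[Tuple[Record, List[str]]]]:
    """Split records into accepted ones and quarantined ones with their failed rule names."""
    accepted: List[Record] = []
    quarantined: List[Tuple[Record, List[str]]] = []
    for record in records:
        failed = [name for name, check in rules if not check(record)]
        if failed:
            quarantined.append((record, failed))
        else:
            accepted.append(record)
    return accepted, quarantined


good = {"id": "t1", "amount": 10, "currency": "EUR"}
bad = {"id": "", "amount": -1, "currency": "GBP"}
accepted, quarantined = validate([good, bad])
print(quarantined[0][1])  # ['has_id', 'amount_non_negative', 'known_currency']
```

Quarantining bad records instead of dropping them keeps an audit trail and lets you fix and reprocess them later.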

Real-World Use Cases

Financial Services

You can see the power of unified batch and stream processing in financial services. Banks and payment companies use these systems to protect your money and keep transactions safe.

You get real-time tracking of your transactions. This helps stop credit card fraud by sending alerts when something unusual happens.

You benefit from immediate identification and flagging of suspicious activities. Real-time monitoring helps banks act fast and keep your account secure.

When you use your card, the system checks for problems right away. This keeps your finances safer and gives you peace of mind.
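One common real-time rule is a velocity check: too many transactions on the same card in a short period trigger an alert. The Python sketch below assumes events arrive in timestamp order. The thresholds (more than 3 transactions within 60 seconds) are illustrative, and `velocity_alerts` is a hypothetical name. Production fraud systems combine many such signals.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, Iterable, Iterator, Tuple

Txn = Tuple[str, float]  # hypothetical (card_id, event timestamp in seconds)


def velocity_alerts(
    txns: Iterable[Txn], max_txns: int = 3, window_s: float = 60.0
) -> Iterator[Tuple[str, float]]:
    """Flag a card as soon as it exceeds `max_txns` transactions within `window_s` seconds.

    Assumes in-order timestamps per card and a non-negative `window_s`.
    """
    recent: Dict[str, Deque[float]] = defaultdict(deque)
    for card, ts in txns:
        window = recent[card]
        window.append(ts)
        while ts - window[0] > window_s:  # evict transactions older than the window
            window.popleft()
        if len(window) > max_txns:
            yield card, ts


stream = [("c1", 0), ("c1", 10), ("c1", 20), ("c1", 30), ("c2", 35), ("c1", 100)]
print(list(velocity_alerts(stream)))  # [('c1', 30)]
```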

E-commerce

Unified processing changes how you shop online. You experience smooth and personalized service because the system connects backend operations with what you see on the website. Your orders, inventory, and recommendations update instantly. This makes shopping easier and more enjoyable for you.

| Technical Advantage | Description |
|---|---|
| Centralized integration | The platform brings together all applications and data sources, so you never face data silos. |
| Real-time data synchronization | Your actions update across all channels right away, so you always see the latest information. |
| Automated workflows | The system handles order processing and inventory updates automatically, saving time and effort. |

You get faster service and fewer mistakes. The store can focus on giving you a better experience.
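The automated order-and-inventory workflow can be sketched as an event handler. It updates stock and immediately publishes the change for the website and other channels. The Python below is a simplified, single-process illustration; `Inventory`, `place_order`, and the event strings are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Inventory:
    stock: Dict[str, int]
    events: List[str] = field(default_factory=list)  # what downstream channels would receive

    def place_order(self, sku: str, qty: int) -> bool:
        """Reserve stock for an order and publish the change right away."""
        available = self.stock.get(sku, 0)
        if qty <= 0 or qty > available:
            self.events.append(f"rejected {sku} x{qty}")
            return False
        self.stock[sku] = available - qty
        self.events.append(f"stock {sku}={self.stock[sku]}")
        if self.stock[sku] == 0:
            self.events.append(f"out_of_stock {sku}")
        return True


inv = Inventory({"mug": 2})
inv.place_order("mug", 2)
inv.place_order("mug", 1)
print(inv.events)  # ['stock mug=0', 'out_of_stock mug', 'rejected mug x1']
```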

IoT Applications

Unified processing helps you manage devices and sensors in the Internet of Things (IoT). You need systems that scale and respond quickly. Edge computing lets your devices process data locally, which means faster decisions and less waiting.

| Benefit | Description |
|---|---|
| Scalability Solutions | Edge computing spreads the work across many local nodes, making it easier to grow your IoT network. |
| Low-Latency Processing | You get low-latency processing, which is key for things like smart grids and self-driving cars. |
| Reduced Latency | Local processing cuts down on delays and saves bandwidth by filtering data before sending it to the cloud. |

Edge computing boosts real-time decision-making for critical systems.

You see faster responses, which helps keep your operations running smoothly.

With unified processing, you can trust your IoT systems to scale and react in real time.
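Filtering data before sending it to the cloud is often implemented as a deadband filter on the device or gateway. The Python sketch below assumes numeric sensor readings and an illustrative threshold. `deadband` is a hypothetical helper, not part of any IoT SDK.

```python
from typing import Iterable, Iterator, Optional, Tuple

Reading = Tuple[float, float]  # hypothetical (timestamp, temperature)


def deadband(readings: Iterable[Reading], threshold: float) -> Iterator[Reading]:
    """Edge-side filter: forward a reading only if it moved more than `threshold`
    since the last forwarded value, so steady signals use little bandwidth."""
    last_sent: Optional[float] = None
    for ts, value in readings:
        if last_sent is None or abs(value - last_sent) > threshold:
            last_sent = value
            yield ts, value


readings = [(0, 20.0), (1, 20.1), (2, 20.2), (3, 21.0), (4, 21.05)]
print(list(deadband(readings, threshold=0.5)))  # [(0, 20.0), (3, 21.0)]
```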

Challenges & Solutions

Latency & Throughput

You want your data system to be fast and reliable. Unified batch and stream processing faces several challenges that can slow things down or make results less accurate. Here is a table that shows some of the most common issues:

| Challenge | Description |
|---|---|
| Efficient State Management | The compute engine must manage the state efficiently in both streaming and batch contexts. |
| Event-Time Semantics | Processing data based on the event time is crucial for maintaining accuracy in both contexts. |
| Exactly-Once Processing Guarantees | Ensures data consistency across both real-time and batch pipelines. |
| Managing Separate Infrastructures | Companies often manage two separate infrastructures for data ingestion, storage, and processing. |
| Data Duplication | The same data may need to be ingested and stored differently for batch and streaming pipelines. |
| Increased Operational Overhead | Maintaining two technology stacks leads to higher operational overhead for teams. |

You can solve these problems by choosing platforms that support both batch and stream processing in one system. This reduces duplication and helps you keep your data accurate and up to date.
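Event-time semantics are the hardest item in this table to picture. The Python sketch below uses tumbling windows and a simple bounded-delay watermark: the largest event time seen so far, minus a fixed `max_delay`. `tumbling_sums` and its parameters are illustrative. Engines such as Flink or Beam offer richer watermark and lateness handling.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, Tuple

Event = Tuple[int, int]  # hypothetical (event_time_seconds, value)


def tumbling_sums(events: Iterable[Event], size: int, max_delay: int) -> Iterator[Tuple[int, int]]:
    """Sum values per event-time window [start, start + size).

    A window is emitted once the watermark passes its end, so moderately late
    (out-of-order) events are still counted. Events arriving after their window
    was emitted are dropped here for simplicity.
    """
    open_windows: Dict[int, int] = defaultdict(int)
    max_seen = float("-inf")
    watermark = float("-inf")
    for event_time, value in events:
        start = event_time - event_time % size
        if start + size <= watermark:
            continue  # too late: this window has already been emitted
        open_windows[start] += value
        max_seen = max(max_seen, event_time)
        watermark = max_seen - max_delay
        for s in sorted(w for w in open_windows if w + size <= watermark):
            yield s, open_windows.pop(s)
    # End of a bounded (batch) input: flush every window that is still open.
    for s in sorted(open_windows):
        yield s, open_windows.pop(s)


events = [(1, 1), (12, 1), (8, 1), (25, 1), (3, 1)]
print(list(tumbling_sums(events, size=10, max_delay=5)))  # [(0, 2), (10, 1), (20, 1)]
```

In this run, the out-of-order event at time 8 is still counted in window 0. The event at time 3 arrives after that window was emitted, so it is dropped. Running the same function over a finished historical file simply ends with the final flush, which is one way a single code path can serve both batch and streaming inputs.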

State Management

State management keeps track of information as your data flows through the system. You may face problems like:

Eventual consistency issues. Different services might show different results at the same time, which makes it hard to trust real-time information.

Orchestration complexity. As your workflows grow, you need to understand both the business steps and the technical details.

Failure identification. When something goes wrong, you must know how each step connects to find the problem quickly.

Modern platforms help you by offering tools that track state, manage workflows, and alert you to errors. You get better control and more reliable results.

Operational Complexity

Running unified systems at scale brings its own set of challenges. You often need to manage two separate infrastructures for data ingestion, storage, and processing. This can lead to data duplication and higher operational overhead. Here is a summary:

| Complexity Type | Description |
|---|---|
| Separate Infrastructures | Companies manage two distinct infrastructures for data ingestion, storage, and processing. |
| Data Duplication | The same data is often ingested and stored differently for batch and streaming pipelines. |
| Increased Operational Overhead | Maintaining two technology stacks leads to higher operational overhead and complexity. |

You can reduce complexity by consolidating your tools and automating routine tasks. This helps your team focus on delivering value instead of managing systems.

Future Trends & Best Practices

Evolving Technologies

You will see rapid changes in unified batch and stream processing. New advancements help you manage data more easily and get faster results. Many companies now use systems that handle both batch and streaming data together. This shift reduces complexity and gives you real-time analytics at a larger scale.

Here is a table showing some of the latest advancements:

| Advancement Type | Description |
|---|---|
| Unification of Batch and Streaming | You can manage both workloads in one system, making your work simpler and faster. |
| AI-Powered Stream Processing | AI helps you spot problems quickly and optimize your data flows without manual work. |
| Edge Computing | You process data closer to where it is created, which cuts down on delays and supports real-time apps. |
| Emerging Platforms | New tools like Condense, Confluent Cloud, and Redpanda give you more power and flexibility. |
| Unified Storage Layer | You get strong data safety and can search both real-time and old data with ease. |

You will notice more AI features in stream processing. These features help you find issues and improve performance automatically. Edge computing also grows in importance. It lets you process data right where it starts, which means less waiting and faster decisions. New platforms and storage layers make it easier for you to keep your data safe and easy to use.

Tip: Watch for new tools and AI features that can help you get more from your data.

Actionable Practices

You can follow some best practices to get the most value from unified processing. These steps help you work better and show the impact of your data projects.

Implement Agile Project Management: Use methods like Scrum or Kanban. These help your team respond quickly to changes and business needs.

Measure IT’s Impact on Business Outcomes: Set clear goals and track your progress. Share results with your team and leaders so everyone sees the value.

Foster a Culture of Collaboration: Bring together people from different teams. When you work together, you solve problems faster and reach your goals.

Note: When you use these practices, you help your team stay focused, work together, and show real results from your data projects.

Unified batch and stream processing gives you real-time insights and helps your business grow. You should check your current workflows for warning signs, like quotes created outside your CRM or approvals managed in emails.

| Warning Sign | Impact Level | Action Required |
|---|---|---|
| Quotes created outside CRM systems | High | Immediate workflow integration needed |
| Approval processes via email/spreadsheets | High | Embedded approval workflows required |
| Service teams lack deal context post-close | Medium | Quote-to-service integration essential |

You can find learning resources, such as tutorials and community support, to keep your skills sharp. Real-time analytics, IoT growth, and cloud platforms make unified processing a smart choice for your future.

FAQ

What is unified batch and stream processing?

Unified batch and stream processing lets you handle both old and new data in one system. You can analyze past trends and react to live events without switching tools.

Why should you use a unified approach?

You save time and reduce errors. You do not need to manage two separate systems. This approach helps you get real-time insights and make better decisions faster.

Can you switch from batch to stream processing easily?

You can move to unified processing step by step. Many tools support both methods. You start with your current setup and add streaming features as you grow.

What are common challenges with unified processing?

You may face issues like data consistency, system complexity, and high resource use. You solve these by choosing the right tools and following best practices.

Which industries benefit most from unified processing?

You see big benefits in finance, e-commerce, and IoT. These fields need fast decisions and real-time data. Unified processing helps you stay ahead in these industries.
