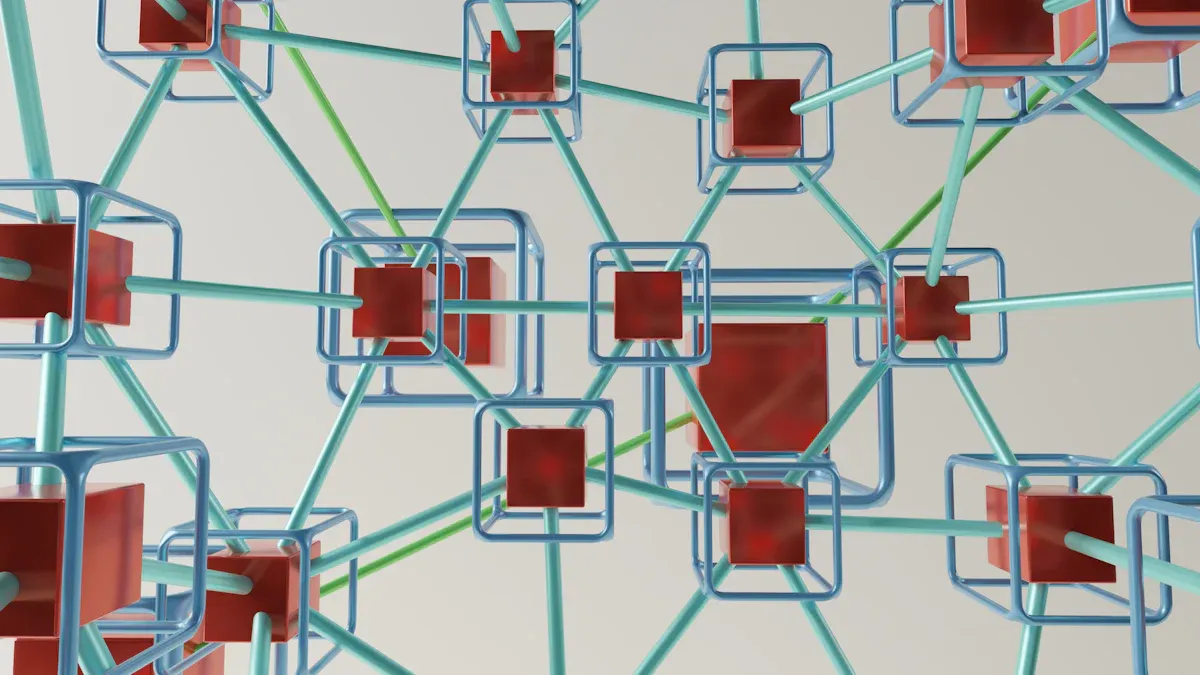

A Practical Guide to Building Data Pipelines Using Bronze, Silver, and Gold Layers

You can use Bronze, Silver, and Gold layers to organize a data pipeline into clear stages. This pattern, called the Medallion Architecture, refines raw data step by step, taking you from messy data sources to a single trusted system. Companies that use this method report benefits in three areas:

| Benefit | Description |
|---|---|
| Data Quality | Organized checks make data more accurate. |
| Scalability | Layers let the system grow easily. |
| Usability | A single trusted source makes data easy to find. |

Good data helps you make better choices and react faster to changes.

Key Takeaways

The Bronze layer stores raw data exactly as it arrives, giving you a backup for later audits and reprocessing.

The Silver layer cleans, validates, and standardizes the data so it is correct and ready for analysis.

The Gold layer provides curated, business-ready data for reports and decision-making.

Layered pipelines improve data quality and make data easier to manage, because each layer has one clearly defined job.

Choosing the right layer for each task saves time and prevents errors.

Bronze, Silver, Gold Layers in Data Pipelines

Layer Definitions

The Bronze, Silver, and Gold layers are successive stages for organizing data. Each layer has a distinct purpose and characteristics. The table below summarizes the role of each layer in a typical data pipeline:

| Layer | Purpose | Characteristics | Use Cases |
|---|---|---|---|
| Bronze | Entry point for raw, unprocessed data. | Data mirrors source structure; supports batch and streaming; focuses on auditability. | Analysts assess data quality; reprocessing data; maintaining historical archives. |
| Silver | Processes data for cleansing and validation, creating an enterprise view. | Data is filtered and deduplicated; follows ELT methodology; stored in optimized formats. | Data specialists train machine learning models; exploratory data analysis. |
| Gold | Contains curated, consumption-ready data for specific business use cases. | Data is transformed and enriched; optimized for performance with fewer joins. | Business intelligence dashboards; predictive modeling; customer segmentation. |

Data flows through the layers in order. The Bronze layer ingests raw data and keeps it unchanged. The Silver layer cleans and validates it. The Gold layer, last in the chain, serves high-quality data for reports and analytics.

Why Use Layered Data Pipelines

Layered data pipelines address many problems found in traditional systems. Separating the work into layers keeps systems from becoming tangled and reduces the amount of custom code, which simplifies your work and helps avoid mistakes. The table below lists the common problems that layering helps solve:

| Challenge | Description |
|---|---|
| Complexity | Traditional pipelines create a complex web of siloed systems, increasing maintenance challenges. |
| Manual Coding | Requires extensive custom coding for each pipeline, leading to inefficiencies and errors. |
| Fragility | Tightly coupled components mean failures can halt the entire process, making them prone to errors. |
| Accumulated Technical Debt | Custom solutions lead to hidden costs and complexities, hindering agility and adaptability. |
| Resource-Intensive Maintenance | Ongoing maintenance is costly and time-consuming, requiring significant reworking as needs change. |

💡 Using layers makes Data Pipelines easier to handle and more stable. You can fix issues quickly and keep data neat.

Benefits of Structured Data

Organizing data into layers brings several benefits: you can work with data faster and make fewer mistakes, developers can spot patterns more easily, and investigations that used to take hours can be finished in minutes. The table below shows the main benefits:

| Benefit | Description |
|---|---|
| Developer Productivity | Measurable improvements in productivity due to AI agents quickly identifying patterns. |
| Investigation Time | Investigations that previously took hours can now be completed in minutes. |
| Data Processing Efficiency | Enhanced efficiency in data processing reduces the time for analysis and increases accuracy. |

Layering also makes changes safer, troubleshooting easier, and data reusable across more use cases. It supports compliance and data security: you can trace every change, apply the same rules everywhere, and protect private data before it reaches consumers.

🛡️ With structured layers, you can audit every step and apply rules consistently. This keeps data secure and lowers the risk of leaks.

Medallion Architecture Overview

Design Principles

The Medallion Architecture organizes large volumes of data by refining it in stages. It has three layers, Bronze, Silver, and Gold, and each performs a specific step:

| Layer | Description |
|---|---|
| Bronze Layer | Stores data as it arrives, from both batch and streaming sources, so you can replay historical data later. |
| Silver Layer | Holds cleaned and conformed data, ready for different workloads, and can represent current or historical data. |
| Gold Layer | Holds high-quality, easy-to-use data shaped for business needs and reporting. |

The layers are a guideline, not a strict rulebook; you can adapt the Medallion Architecture to your needs. The core goal is that data quality improves as data moves from Bronze to Gold, which keeps your data organized and easy to manage.

💡 The Medallion Architecture lets you build Data Pipelines that grow with your business. You can add new sources or change rules without breaking the whole system.

Role in Modern Data Engineering

Modern data teams face growing data volumes along with stricter demands for security and accuracy. The Medallion Architecture addresses these problems by giving you a clear path from raw data to useful answers:

Each layer runs independently, so you can scale it when needed.

Incremental processing handles only new or changed data, which saves time and money.

A history of every change lets you trace and fix mistakes.

The pattern works with cloud platforms and storage layers such as Azure and Delta Lake.
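To make incremental processing concrete, here is a minimal sketch in plain Python. It assumes each source record carries an `updated_at` timestamp and that the pipeline stores a watermark (the latest timestamp already processed) between runs; `incremental_batch` is a hypothetical helper, not part of any library, and it does not use the change-tracking features that engines such as Delta Lake provide.

```python
from datetime import datetime

def incremental_batch(records, last_watermark):
    """Select only records changed since the last run (watermark-based increment).

    `records` are dicts with a hypothetical "updated_at" datetime field.
    Returns the new/changed records and the watermark to store for the next run.
    """
    new_records = [r for r in records if r["updated_at"] > last_watermark]
    new_watermark = max((r["updated_at"] for r in new_records), default=last_watermark)
    return new_records, new_watermark


# Usage: only the record updated after the stored watermark is processed.
records = [
    {"id": 1, "updated_at": datetime(2024, 5, 1, 8, 0)},
    {"id": 2, "updated_at": datetime(2024, 5, 2, 9, 30)},
]
batch, watermark = incremental_batch(records, last_watermark=datetime(2024, 5, 1, 12, 0))
print([r["id"] for r in batch], watermark)
```

Storing the returned watermark durably, for example in a small control table, is what lets the next run skip everything it has already processed.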

As data moves from Bronze to Silver, you clean and validate it, removing duplicates and correcting errors. By the time it reaches Gold, it is ready for reports and business decisions. Automation moves data through each step, making the process faster and more reliable.

🚀 The Medallion Architecture turns raw data into valuable insights. You can trust your data and make better choices for your business.

Bronze Layer Explained

Raw Data Ingestion

Every data pipeline starts at the Bronze layer, which gathers raw data from many sources. You do not transform the data here; it stays essentially as it arrived. Preserving the original data records where it came from (its lineage) and lets you fix problems later by reprocessing it.
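Here is a minimal sketch of Bronze ingestion in plain Python, assuming a Bronze table modeled as an append-only list of dicts. The raw payload is stored exactly as received, whatever its format, and only metadata (source and ingestion time) is added alongside it. The function and field names are illustrative, not a specific tool's API.

```python
from datetime import datetime, timezone

def land_in_bronze(bronze_table, raw_payload, source):
    """Append one raw record to the Bronze table without modifying its content."""
    bronze_table.append({
        "payload": raw_payload,   # stored exactly as received (JSON, CSV, log lines)
        "source": source,         # lineage: which system the record came from
        "ingested_at": datetime.now(timezone.utc).isoformat(),  # when it landed
    })


# Usage: records in different formats land side by side, untouched.
bronze = []
land_in_bronze(bronze, '{"order_id": 1, "amount": "19.99"}', source="orders_db")
land_in_bronze(bronze, "2024-05-01 12:00:01 GET /checkout 200", source="web_logs")
```

Because nothing is parsed or cleaned at this stage, a bug in later logic can never destroy the original record.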

There are different ways to bring data into the Bronze layer. Here are some common ways:

| Data Ingestion Method | Description |
|---|---|
| Batch Data Ingestion | Periodic data dumps from transactional databases. |
| Real-time Streaming Data Ingestion | Continuous data streams, such as user clicks and IoT events. |
| API-based Data Ingestion | Fetching external data via web APIs. |

Batch ingestion suits moving large volumes of data on a schedule. Real-time streaming captures events as they happen. API-based ingestion pulls data from external sources on demand.

📝 Tip: If you keep raw data in the Bronze layer, you have a backup. You can always check the original files if you need to.

Common Sources

The Bronze layer can collect data from many places. Some examples are:

Transactional databases from business systems

Logs from web servers or apps

Sensor data from IoT devices

Files from cloud storage or FTP servers

Data from third-party APIs

Each source has its own format and structure. The Bronze layer lets you store all this data in one spot, even if it looks different.

Use Cases

The Bronze layer helps with many important jobs in Data Pipelines:

Storing old records for audits or fixing problems

Reprocessing data if you find mistakes later

Testing new rules without changing the original data

Keeping a backup in case you lose information
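Reprocessing works because Silver can always be rebuilt from Bronze. The sketch below assumes Bronze rows shaped like `{"payload": "<raw JSON>"}` and a hypothetical `parse_order` transform. If you later fix or change the transform, or want to test a new rule, you rerun `rebuild_silver` over the untouched Bronze records.

```python
import json

def parse_order(payload):
    """Hypothetical Silver transform: parse one raw JSON order and normalize types."""
    record = json.loads(payload)
    return {
        "order_id": int(record["order_id"]),
        "amount": round(float(record["amount"]), 2),  # normalize to 2 decimals
    }

def rebuild_silver(bronze_table, transform):
    """Recompute the whole Silver table from the untouched Bronze records."""
    return [transform(row["payload"]) for row in bronze_table]


bronze = [
    {"payload": '{"order_id": "1", "amount": "19.999"}'},
    {"payload": '{"order_id": "2", "amount": "5"}'},
]
silver = rebuild_silver(bronze, parse_order)
```

The transform returns new dicts and never writes back to `bronze_table`, so a faulty rule only costs a rerun, not lost data.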

The Bronze layer helps you keep track of your data. It is the base for all the next steps in your pipeline.

Silver Layer Explained

Data Validation

The Silver layer is where you validate your data and catch mistakes early. You define rules that check whether numbers fall within expected ranges and whether dates are well-formed. You write automated tests to confirm that transformations produce the expected results, for example that sales totals still add up. You also track quality metrics such as row counts and the share of missing values. Together, these checks make your data trustworthy and reduce mistakes.

| Technique | Description |
|---|---|
| Validation Rules | Implement checks to catch errors early, such as verifying data types and ranges. |
| Automated Testing | Develop tests to ensure transformations meet expected outcomes, like verifying total sales. |
| Quality Metrics | Monitor metrics like row counts and null value percentages to track data quality over time. |
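A minimal sketch of validation rules and quality metrics in plain Python. The record shape (`amount`, `sale_date`) and the allowed amount range are hypothetical; real pipelines usually express such rules in a data quality framework, but the logic is the same: return violations per record and compute metrics per batch.

```python
from datetime import date

AMOUNT_RANGE = (0, 100_000)  # hypothetical allowed range for a sale amount

def validate_sale(row):
    """Return the list of rule violations for one sales record (empty means valid)."""
    errors = []
    amount = row.get("amount")
    # Type rule: bools are ints in Python, so exclude them explicitly.
    if isinstance(amount, bool) or not isinstance(amount, (int, float)):
        errors.append("amount is not numeric")
    elif not AMOUNT_RANGE[0] <= amount <= AMOUNT_RANGE[1]:  # range rule
        errors.append("amount out of range")
    try:
        date.fromisoformat(row.get("sale_date"))  # date-format rule
    except (TypeError, ValueError):
        errors.append("sale_date is not an ISO date")
    return errors

def quality_metrics(rows, column):
    """Row count and percentage of null values in one column, for tracking over time."""
    total = len(rows)
    nulls = sum(1 for r in rows if r.get(column) is None)
    return {"row_count": total, "null_pct": 100.0 * nulls / total if total else 0.0}


rows = [
    {"amount": 50, "sale_date": "2024-01-31"},
    {"amount": -5, "sale_date": "2024-13-01"},
    {"amount": None, "sale_date": "2024-02-01"},
]
for r in rows:
    print(validate_sale(r))
print(quality_metrics(rows, "amount"))
```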

✅ Tip: If you check your data often, you can find problems before they show up in reports.

Transformation Steps

In the Silver layer you improve data by cleaning it: you remove duplicate records, fill in missing values so the dataset is complete, and convert raw values to the right types, such as turning numeric strings into numbers. You also structure information into columns and tables, which makes it easier to analyze, and consolidate individual batches into one unified dataset for your team.

| Transformation Step | Impact on Data Quality |
|---|---|
| Removing duplicate records | Ensures data consistency and accuracy |
| Populating missing values | Improves completeness of the dataset |
| Structuring data into columns and tables | Enhances usability for analysis |
| Converting raw data into appropriate types | Standardizes data for better processing |
| Consolidating individual batches | Creates a unified dataset for analysis |
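Three of these steps (structuring into columns, converting types, and consolidating batches) can be sketched with Python's standard `csv` module. The sketch assumes each Bronze batch is raw CSV text with a header row; the `SCHEMA` mapping is a hypothetical target schema, not something the source defines.

```python
import csv
import io

# Hypothetical target schema: column name -> type conversion applied in Silver.
SCHEMA = {"order_id": int, "quantity": int, "unit_price": float}

def structure_batch(raw_csv_text):
    """Split one raw CSV batch into named columns and cast each value to its type."""
    reader = csv.DictReader(io.StringIO(raw_csv_text))
    return [{col: cast(row[col]) for col, cast in SCHEMA.items()} for row in reader]

def consolidate(batches):
    """Combine individually ingested batches into one unified, typed dataset."""
    unified = []
    for batch in batches:
        unified.extend(structure_batch(batch))
    return unified


batches = [
    "order_id,quantity,unit_price\n1,2,9.5\n",
    "order_id,quantity,unit_price\n2,1,20\n",
]
orders = consolidate(batches)
```

Keeping the schema in one mapping means a type change is made in a single place and applies to every batch.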

🛠️ Note: Clean and neat data helps you get answers faster. It also makes your Data Pipelines work better.

Use Cases

The Silver layer supports many business and analytics tasks. It brings together data from different sources and applies business logic to improve it; you clean and join tables so the data supports deeper analysis. For example, you might clean taxi ride data by removing duplicate trips and filling in missing passenger counts. Data engineers, data scientists, ML engineers, and analysts all work with Silver data.

| Aspect | Description |
|---|---|
| Purpose | To clean, transform, and enrich data for better quality and usability. |
| Description | Connects data from various sources using business logic, processes data from the bronze layer. |
| Inclusions | Cleansed data, joined tables, and datasets with basic transformations applied. |
| Example | Cleaning taxi ride data by removing duplicates and filling in missing passenger counts. |
| Who can work with it | Data engineers, data scientists, ML engineers, and data analysts can utilize the refined data. |
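The taxi example can be sketched in plain Python as follows. It assumes rides are dicts with a `trip_id` that identifies duplicates, and it fills missing `passenger_count` values with the median of the known counts (rounded down), falling back to 1. That fill rule is an illustrative assumption; choose whatever rule your business agrees on.

```python
from statistics import median

def clean_taxi_rides(rides):
    """Drop duplicate trips (same trip_id) and fill missing passenger counts."""
    seen = set()
    unique = []
    for ride in rides:
        if ride["trip_id"] not in seen:       # keep the first occurrence only
            seen.add(ride["trip_id"])
            unique.append(dict(ride))         # copy so the input stays unchanged
    known = [r["passenger_count"] for r in unique if r["passenger_count"] is not None]
    fill_value = int(median(known)) if known else 1  # assumption: median, else 1
    for r in unique:
        if r["passenger_count"] is None:
            r["passenger_count"] = fill_value
    return unique


rides = [
    {"trip_id": "a", "passenger_count": 1},
    {"trip_id": "a", "passenger_count": 1},     # duplicate trip
    {"trip_id": "b", "passenger_count": None},  # missing count
    {"trip_id": "c", "passenger_count": 3},
]
cleaned = clean_taxi_rides(rides)
```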

💡 The Silver layer gives you good data for reports, models, and smart choices.

Gold Layer Explained

Data Enrichment

The Gold layer holds data that is ready to consume. Starting from the validated data in the Silver layer, you add business context and extra attributes, and you turn raw figures into KPIs and other key metrics. Gold data is highly curated and aggregated, and its structure matches your business needs so you can answer real questions directly. Gold tables also use data models that are easy to read and fast to query.
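A minimal sketch of Gold-layer enrichment and KPI computation in plain Python. It assumes Silver orders carry `order_date`, `store_id`, and `amount`, and that a hypothetical `region_by_store` lookup supplies the business context; the KPIs (revenue, order count, average order value) are examples, not a prescribed set.

```python
from collections import defaultdict

def daily_revenue_kpis(orders, region_by_store):
    """Aggregate Silver orders into a Gold KPI table keyed by (day, region)."""
    totals = defaultdict(lambda: {"revenue": 0.0, "orders": 0})
    for o in orders:
        region = region_by_store.get(o["store_id"], "unknown")  # enrichment step
        key = (o["order_date"], region)
        totals[key]["revenue"] += o["amount"]
        totals[key]["orders"] += 1
    # Derived KPI computed once, so every dashboard reads the same value.
    return {
        key: {**t, "avg_order_value": t["revenue"] / t["orders"]}
        for key, t in totals.items()
    }


orders = [
    {"order_date": "2024-05-01", "store_id": 1, "amount": 10.0},
    {"order_date": "2024-05-01", "store_id": 1, "amount": 30.0},
    {"order_date": "2024-05-01", "store_id": 2, "amount": 5.0},
]
kpis = daily_revenue_kpis(orders, region_by_store={1: "north", 2: "south"})
```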

🏅 The Gold layer gives you data that is right for business. You can trust this data when making big choices.

Analytics and Reporting

The Gold layer feeds analytics and reporting. It powers dashboards and business reports and supports advanced analytics such as sales forecasting and customer segmentation. It also lets you monitor product quality and track performance. Because the data is already prepared, queries return answers quickly, and each audience can use views tailored to its needs.

High-quality data ready for analytics

Works with dashboards and reports

Lets you do advanced analytics and predictions

Supports customer segmentation and sales forecasting

Use Cases

The Gold layer is central to decision-making. It condenses large volumes of detailed records into clear summaries that make complex data easy to understand. Periodic snapshots and KPIs let you track how things change over time, and the same layer supports everything from simple reports to in-depth analytics. A well-designed Gold layer helps your team make informed decisions that support your business plans.
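Tracking change over time with snapshots can be sketched as an append-only history of dated KPI values. The structure below (a list of dicts with a `snapshot_date` key) and the helper names are hypothetical; in practice a snapshot is usually a dated partition of a Gold table.

```python
from datetime import date

def take_snapshot(history, snapshot_date, kpis):
    """Append a dated copy of the current KPI values to the snapshot history."""
    history.append({"snapshot_date": snapshot_date, **kpis})
    return history

def kpi_change(history, kpi):
    """Change in one KPI between the two most recent snapshots (None if too few)."""
    if len(history) < 2:
        return None
    return history[-1][kpi] - history[-2][kpi]


history = []
take_snapshot(history, date(2024, 5, 1), {"revenue": 100.0, "active_customers": 40})
take_snapshot(history, date(2024, 5, 2), {"revenue": 130.0, "active_customers": 42})
print(kpi_change(history, "revenue"), kpi_change(history, "active_customers"))
```

Because old snapshots are never overwritten, a report can always show what the numbers looked like on a given day.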

📊 The Gold layer helps you see everything and make good decisions.

Comparing Layers in Data Pipelines

Comparison Table

Each layer handles data differently. The table below compares them on data quality, latency and freshness, and access patterns (who uses the data and how).

| Dimension | Bronze Layer | Silver Layer | Gold Layer |
|---|---|---|---|
| Data quality | Minimal checks. You get basic completeness and schema-on-read. | Strong validations. You see type checks, deduplication, and outlier handling. | Certified quality. You find metric definitions, service level objectives (SLOs), and change control. |
| Latency & freshness | Fastest ingest. You can land data in near real-time at the lowest cost. | Near real-time to hourly. The speed depends on how often upstream data arrives and SLAs. | Hourly to daily. Some dashboards update in real time. Releases follow strict rules for business needs. |
| Access pattern | Rarely queried. You might use it for data science exploration. | Widely used by analytics and engineering teams. | Main source for business intelligence, self-serve marketing, and executive reports. |

📌 This table helps you see what each layer does best. Bronze starts with raw data. Gold ends with trusted data you can use right away.

Choosing the Right Layer

You should pick a layer that matches your goal. Each layer is good for a different job:

Bronze Layer: Use this if you want to keep raw data. It is good for audits or when you need to check where data came from.

Silver Layer: Pick this when you need clean data for reports or models. You can trust the data here for most analytics work.

Gold Layer: Choose this for business dashboards or sharing results. This layer gives you the best data for big decisions.

If you want new data fast, start with Bronze. If you need to share answers, use Gold. Silver is best when you want both speed and good data.

💡 Tip: Always use the layer that fits your needs. This saves you time and helps you avoid errors.

Pipeline Example: Bronze to Gold

Source and Requirements

Suppose you want to build a pipeline that turns raw data into business-ready information. First, identify where your data comes from and what your business needs. Data might come from sales systems, customer apps, or sensors, and each source has its own format and rules.

Here is a table that shows what you need for a pipeline that goes from Bronze to Gold:

| Requirement | Description |
|---|---|
| High Quality and Usability | Data is fully cleaned, transformed, and aggregated for accuracy and relevance. |
| Business-Ready Data | Structured for reporting, KPI tracking, machine learning, and business intelligence. |
| Data Marts | May include specialized datasets for different business units like finance, sales, or operations. |
| Governance | Requires agreement on shared definitions and important KPIs across divisions. |
| Key Entities and Metrics | Must capture shared definitions of important entities and how KPIs are calculated. |

Everyone needs to agree on what things mean. For example, you must decide what counts as a "customer" or how to measure "sales." This helps keep reports clear and numbers correct.
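One way to enforce a shared definition is to implement it once and have every report call it. The sketch assumes a hypothetical rule that a customer is "active" if their last order falls within the past 90 days; the window and names are illustrative, and the point is that the rule lives in one place.

```python
from datetime import date, timedelta

# Hypothetical shared definition agreed across divisions: a customer is
# "active" if their last order is at most 90 days before the reporting date.
ACTIVE_WINDOW = timedelta(days=90)

def is_active_customer(last_order_date, as_of):
    return as_of - last_order_date <= ACTIVE_WINDOW

def active_customer_count(last_orders, as_of):
    """Count active customers from a mapping of customer_id -> last order date."""
    return sum(1 for d in last_orders.values() if is_active_customer(d, as_of))


as_of = date(2024, 6, 30)
last_orders = {
    "c1": date(2024, 6, 1),   # 29 days ago: active
    "c2": date(2024, 1, 1),   # 181 days ago: inactive
    "c3": date(2024, 4, 1),   # exactly 90 days ago: active (boundary)
}
print(active_customer_count(last_orders, as_of))
```

If the business later changes the window, updating `ACTIVE_WINDOW` changes every report consistently.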

📝 The gold layer should always use shared definitions for important things like customers and KPIs. This keeps your data stable and easy to trust.

Bronze Layer Setup

You begin by setting up the Bronze layer. This is where you collect all your raw data. You do not change the data here. You just store it safely so you can use it later.

Follow these steps to set up your Bronze layer:

Assess Data Characteristics: Look at your data. Is it structured, like database tables, or semi-structured, like log files? This helps you choose the best way to store it.

Optimize for Scalability: Choose storage that can grow with your data, and partition data by time or by source.

Manage Metadata: Use a metadata catalog, such as the AWS Glue Data Catalog or Apache Hive Metastore, to track the schema and meaning of each dataset.

Implement Security Measures: Protect your data with encryption and access controls that limit who can read or change it.

Plan Data Retention: Decide how long to keep each dataset, and move older data you rarely need to cheaper storage.
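The partitioning and retention steps can be sketched as two small helpers. The path layout follows the Hive-style `key=value` partition convention; the bucket name `s3://my-lake`, the tier names, and the 30-day and 365-day thresholds are hypothetical values chosen for illustration.

```python
from datetime import date

def bronze_partition_path(root, source, ingest_date):
    """Hive-style partition path: <root>/source=<source>/ingest_date=<YYYY-MM-DD>."""
    return f"{root}/source={source}/ingest_date={ingest_date.isoformat()}"

def storage_tier(ingest_date, today, hot_days=30, retain_days=365):
    """Choose where a partition should live based on its age (hypothetical policy)."""
    age_days = (today - ingest_date).days
    if age_days <= hot_days:
        return "hot"       # frequently read: keep on standard storage
    if age_days <= retain_days:
        return "cold"      # rarely read: move to cheaper storage
    return "expired"       # past the retention period: eligible for deletion


today = date(2024, 5, 20)
print(bronze_partition_path("s3://my-lake/bronze", "orders_db", date(2024, 5, 1)))
print(storage_tier(date(2024, 5, 1), today))
```

Partitioning by source and date means both the retention job and most queries can work on whole folders instead of scanning individual files.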

🔒 Always keep your raw data safe and organized. This makes it easy to fix mistakes or check where your data came from.

Silver Layer Processing

After you collect your raw data, you move it to the Silver layer. Here, you clean and organize the data so it is ready for analysis. You want to make sure your data is correct and easy to use.

Here are the main steps you follow in the Silver layer:

| Processing Step | Description |
|---|---|
| Cleansing | Remove errors and fix wrong values. |
| Deduplication | Get rid of records that show up more than once. |
| Joining | Combine data from different sources to make a full picture. |
| Transformations | Change data formats or structures to fit your needs. |
| Monitoring Completeness | Check that you have all the data you need. |
| Monitoring Uniqueness | Make sure important values are not repeated when they should be unique. |

For example, you might join sales data with customer data to see who bought what, then check for missing or anomalous values so the data is neat and ready for deeper analysis.
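The join and the two monitoring steps from the table can be sketched in plain Python. The field names (`customer_id`, `name`, `sale_id`) are hypothetical. The join is a left join, so sales without a matching customer are kept with a `None` name, which the completeness check then surfaces instead of silently dropping them.

```python
def join_sales_customers(sales, customers):
    """Left-join sales to customers on customer_id; unmatched sales get None."""
    by_id = {c["customer_id"]: c for c in customers}
    return [
        {**s, "customer_name": by_id.get(s["customer_id"], {}).get("name")}
        for s in sales
    ]

def completeness(rows, column):
    """Fraction of rows with a non-null value in `column` (1.0 for no rows)."""
    return sum(r.get(column) is not None for r in rows) / len(rows) if rows else 1.0

def is_unique(rows, column):
    """True if no value in `column` appears more than once."""
    values = [r[column] for r in rows]
    return len(values) == len(set(values))


customers = [{"customer_id": 1, "name": "Ana"}, {"customer_id": 2, "name": "Ben"}]
sales = [{"sale_id": 10, "customer_id": 1}, {"sale_id": 11, "customer_id": 3}]
joined = join_sales_customers(sales, customers)
print(completeness(joined, "customer_name"), is_unique(joined, "sale_id"))
```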

💡 Cleaning and joining your data in the Silver layer helps you find answers faster and trust your results.

Gold Layer Output

The Gold layer is where your data becomes truly useful. You take the clean data from the Silver layer and make it ready for business use. You add extra details, create key metrics, and build reports.

You might make special datasets for different teams, like finance or sales. These are called data marts. You also make sure everyone uses the same rules for things like "total sales" or "active customers." This helps your company make good decisions.

You create dashboards and reports for leaders.

You build datasets for machine learning models.

You track important KPIs, like revenue or customer growth.

🏆 The Gold layer gives you trusted data for reports, dashboards, and smart business moves. You can answer questions quickly and with confidence.

When to Use Layered Data Pipelines

Ideal Scenarios

You should use layered Data Pipelines when you want to organize your data and make it easy to trust. Many companies use this approach for both batch and real-time processing. You can see some common scenarios in the table below:

| Scenario Type | Description |
|---|---|
| Batch processing | Use this when you need to create reports every week or month. Real-time results are not needed. |
| Real-time processing | Choose this for instant insights, like catching fraud or watching sensors in real time. |
| ETL vs. ELT | ETL changes data before storing it. ELT stores data first, then changes it later. |
| Lambda and Kappa architectures | Lambda mixes batch and real-time jobs. Kappa focuses only on real-time data. |

Building each part of the pipeline so it runs independently makes the pipeline more robust and helps you fix problems faster. If your business needs quick answers, focus on real-time processing. Many teams use layered pipelines to keep their data clean and ready for any job.

💡 Tip: Pick the right scenario for your needs. You can mix batch and real-time steps to get the best results.

Limitations

Layered pipelines work well, but you may face some challenges. You need to watch out for issues that can slow you down or make your data less reliable. The table below shows some common limitations:

| Limitation | Description |
|---|---|
| Data quality assurance | You must keep your data correct. Bad data can lead to wrong answers. |
| Data integration complexity | Combining data from many places can be hard and may cause delays. |
| Data volume and scalability | As your data grows, you need systems that can handle more work. |
| Data transformation | Raw data often needs a lot of cleaning before you can use it. |
| Data security and privacy | You must protect private data and follow rules, which can slow down your work. |
| Pipeline reliability | If your pipeline fails, it can stop your business and cost money. |

You should plan for these challenges before you start. Good design and regular checks help you avoid most problems. Always test your pipeline and keep your data safe.

⚠️ Note: No system is perfect. Stay alert for issues and fix them early to keep your data flowing smoothly.

Best Practices and Pitfalls

Design Tips

You can build strong Data Pipelines by following a few simple rules. Start by keeping each layer clear and separate. This helps you find problems fast. Use clear names for your tables and files. Good names make it easy for others to understand your work. Always write down your steps. Documentation helps your team know what happens at each stage.

Try to automate your checks. Set up tests that run every time new data arrives. This keeps your data clean. Use version control for your code and data models. You can track changes and fix mistakes quickly. Plan for growth. Pick tools and storage that can handle more data as your needs grow.
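Automated checks are most useful when they act as a gate: a batch is promoted to the next layer only if every check passes. The sketch below assumes checks are named predicate functions over a batch; `promote_if_valid` and `QualityGateError` are hypothetical names, and orchestration tools offer their own ways to fail a task.

```python
class QualityGateError(Exception):
    """Raised when a batch fails its checks and must not be promoted."""

def promote_if_valid(batch, checks, target):
    """Run every check on a new batch; append it to `target` only if all pass."""
    failures = [name for name, check in checks.items() if not check(batch)]
    if failures:
        # Stop the pipeline step so bad data never reaches the next layer.
        raise QualityGateError(f"checks failed: {', '.join(sorted(failures))}")
    target.extend(batch)
    return len(batch)


checks = {
    "non_empty": lambda b: len(b) > 0,
    "ids_present": lambda b: all("id" in r for r in b),
}
silver_table = []
promote_if_valid([{"id": 1}], checks, silver_table)
```

Raising an exception, rather than logging and continuing, makes the failure visible to whatever scheduler runs the pipeline.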

🛠️ Tip: Review your pipeline often. Small changes can make your system faster and safer.

Common Mistakes

Many people make the same mistakes when building layered pipelines. One common mistake is skipping the Bronze layer. If you do not keep raw data, you cannot go back and fix errors. Another mistake is mixing business logic into the Silver layer. Keep business rules in the Gold layer so you can update them without breaking other steps.

Some teams forget to monitor their pipelines. If you do not watch for errors, you may miss problems until it is too late. Others use too many tools or change tools too often. This can make your system hard to manage.

| Mistake | How to Avoid It |
|---|---|
| Skipping raw data storage | Always keep a copy of original data |
| Mixing business logic too early | Save business rules for the Gold layer |
| Poor monitoring | Set up alerts and regular checks |
| Overcomplicating tool choices | Use simple, proven tools first |

⚠️ Note: Learn from these mistakes. Careful planning and regular checks help you avoid trouble.

Getting Started with Data Pipelines

Implementation Steps

You can build a data pipeline by following a few steps. First, think about what you want to achieve and which problems you hope your data will solve. Next, find out where your data comes from, such as files, databases, or cloud storage.

Here is a simple guide to help you start:

Define Your Objectives: Write down your goals, for example building reports or tracking sales.

Identify Data Sources: List every place your data comes from, such as spreadsheets, APIs, or sensors.

Design Your Pipeline Layers: Plan how you will use the Bronze, Silver, and Gold layers, and decide the job of each one.

Set Up Storage and Tools: Choose where to keep your data, on a cloud platform or on your own servers.

Build and Test Each Layer: Build one layer at a time and test that its output is correct.

Monitor and Improve: Watch your pipeline for errors and adjust it to make it faster and more reliable.
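To see how the layers fit together, here is a minimal end-to-end sketch in plain Python: Bronze lands raw JSON lines unchanged, Silver parses them and drops malformed rows and duplicate `order_id`s, and Gold aggregates revenue per region. The record fields and function names are hypothetical, and a production pipeline would quarantine bad rows rather than discard them.

```python
import json
from collections import defaultdict

def bronze(raw_lines, source):
    """Land raw lines unchanged, tagged with their source."""
    return [{"payload": line, "source": source} for line in raw_lines]

def silver(bronze_rows):
    """Parse JSON, skip malformed rows, and drop duplicate order_ids."""
    seen, rows = set(), []
    for r in bronze_rows:
        try:
            rec = json.loads(r["payload"])
            order_id, amount = int(rec["order_id"]), float(rec["amount"])
        except (ValueError, KeyError, TypeError):
            continue  # sketch only: a real pipeline would quarantine these rows
        if order_id not in seen:
            seen.add(order_id)
            rows.append({"order_id": order_id,
                         "region": rec.get("region", "unknown"),
                         "amount": amount})
    return rows

def gold(silver_rows):
    """Aggregate Silver rows into revenue per region."""
    revenue = defaultdict(float)
    for r in silver_rows:
        revenue[r["region"]] += r["amount"]
    return dict(revenue)


raw = [
    '{"order_id": 1, "region": "north", "amount": 10}',
    '{"order_id": 1, "region": "north", "amount": 10}',  # duplicate
    'not json',                                          # malformed
    '{"order_id": 2, "region": "south", "amount": 5.5}',
    '{"order_id": 3, "region": "north", "amount": 2.5}',
]
print(gold(silver(bronze(raw, "orders_api"))))
```

Each function can be built and tested on its own, which mirrors the "build one layer at a time" step above.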

🏁 Start with just one layer. Add more as you learn new things.

Tools and Resources

There are many tools to help you build Data Pipelines. Some tools are good for people just starting. Others have more features for big jobs.

| Tool Name | Use Case | Skill Level |
|---|---|---|
| Apache Spark | Data processing | Intermediate |
| Azure Data Factory | Cloud pipeline orchestration | Beginner |
| Databricks | Unified analytics | Intermediate |
| AWS Glue | Data integration | Beginner |
| Airflow | Workflow scheduling | Intermediate |

You can find guides and lessons online. Many cloud companies have free classes. Try small projects to get better at using these tools.

💡 Tip: Choose tools that fit what you know and need. You can try new tools as your project gets bigger.

Using Bronze, Silver, and Gold layers in your data pipelines brings several advantages, summarized in the table below:

| Advantage | Description |
|---|---|
| Clear organization | Every step has its own job. |
| Growing quality | Data moves from raw (Bronze) to validated (Silver) to business-ready (Gold). |
| Better BI performance | Dashboards run faster and break less often. |
| Governance | You can trace where data came from, which helps with audits. |

To get started, review your current pipelines and try a small project using this layered setup. It is a low-risk way to see how much the approach improves your results and your confidence in the data.

FAQ

What is the main purpose of the Bronze, Silver, and Gold layers?

You use these layers to organize your data. Each layer helps you clean, check, and prepare data for reports. This makes your data easier to trust and use.

How do I know which layer to use for my project?

You choose a layer based on your goal. Use Bronze for raw data, Silver for cleaned data, and Gold for business reports. Pick the layer that matches your needs.

Can I skip a layer in my data pipeline?

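In most cases you should not. Each layer has a job, and skipping one can make your data less reliable; skipping Bronze, for example, leaves you no raw copy to reprocess when you find an error. You generally get the best results when you use all three layers.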

What tools help me build layered data pipelines?

You can use tools like Databricks, Apache Spark, or Azure Data Factory. These tools help you move, clean, and organize your data in each layer.

How do layered pipelines improve data quality?

Layered pipelines let you check and fix data at every step. You catch mistakes early. You keep your data clean and ready for analysis.
